Configuration

Although AWS Glue 1.0 and 2.0 have different dependencies and versions, the Python library (aws-glue-libs) shares the same branch (glue-1.0) and Spark version. On the other hand, AWS Glue 2.0 supports Python 3.7 and has different default python packages. Therefore, in order to set up a AWS Glue 2.0 development environment, it would be necessary to install Python 3.7 and the default packages while sharing the same Spark-related dependencies.

The Visual Studio Code Remote – Containers extension lets you use a Docker container as a full-featured development environment. It allows you to open any folder or repository inside a container and take advantage of Visual Studio Code’s full feature set. The development container configuration(devcontainer.json) and associating files can be found in the devcontainer folder of the GitHub repository for this post. Apart from the configuration file, the folder includes a Dockerfile, files to keep Python packages to install and a custom Pytest executable for AWS Glue 2.0 gluepytest2– this executable will be explained later.

| . ├── .devcontainer │ ├── 3.6 │ │ └── dev.txt │ ├── 3.7 │ │ ├── default.txt │ │ └── dev.txt │ ├── Dockerfile │ ├── bin │ │ └── gluepytest2 │ └── devcontainer.json ├── .gitignore ├── README.md ├── example.py ├── execute.sh ├── src │ └── utils.py └── tests ├── __init__.py ├── conftest.py └── test_utils.py |

Dockerfile

The Docker image (amazon/aws-glue-libs:glue_libs_1.0.0_image_01) runs as the root user and it is not convenient to write code with it. Therefore a non-root user is created whose user name corresponds to the logged-in user’s user name – the USERNAME argument will be set accordingly in devcontainer.json. Next the sudo program is added in order to install other programs if necessary. More importantly, the Python Glue library’s executables are configured to run with the root user so that the sudo program is necessary to run those executables. Then the 3rd-party Python packages are installed for the Glue 1.0 and 2.0 development environments. Note that a virtual environment is created for the latter and the default Python packages and additional development packages are installed in it. Finally a Pytest executable is copied to the Python Glue library’s executable path. It is because the Pytest path is hard-coded in the existing executable (gluepytest) and I just wanted to run test cases in the Glue 2.0 environment without touching existing ones – the Pytest path is set to /root/venv/bin/pytest in gluepytest2.

## .devcontainer/Dockerfile FROM amazon/aws-glue-libs:glue_libs_1.0.0_image_01 |

Container Configuration

The development container will be created by building an image from the Dockerfile illustrated above. The logged-in user’s user name is provided to create a non-root user and the container is set to run as the user as well. And 2 visual studio code extensions are installed – Python and Prettier. Also the current folder is mounted to the container’s workspace folder and 2 additional folders are mounted – they are to share AWS credentials and SSH keys. Note that AWS credentials are mounted to /roo/.aws because the Python Glue library’s executables will be run as the root user. Then the port 4040 is set to be forwarded, which is used for the Spark UI. Finally additional editor settings are added at the end.

// .devcontainer/devcontainer.json { “source=${localEnv:HOME}/.aws,target=/root/.aws,type=bind,consistency=cached”, “source=${localEnv:HOME}/.ssh,target=${localEnv:HOME}/.ssh,type=bind,consistency=cached” |

Launch Container

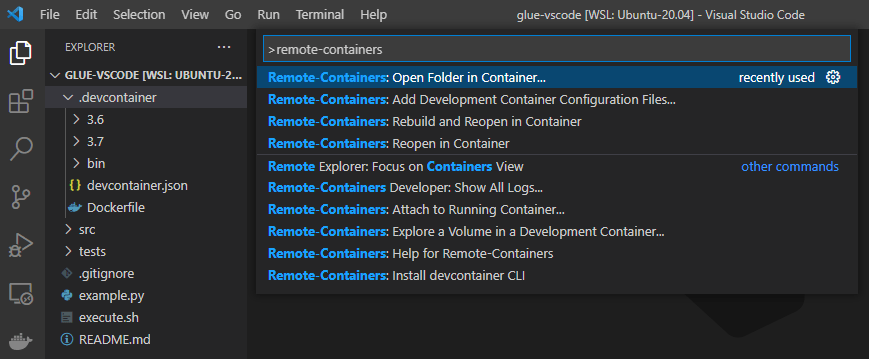

The development container can be run by executing the following command in the command palette.

Remote-Containers: Open Folder in Container…

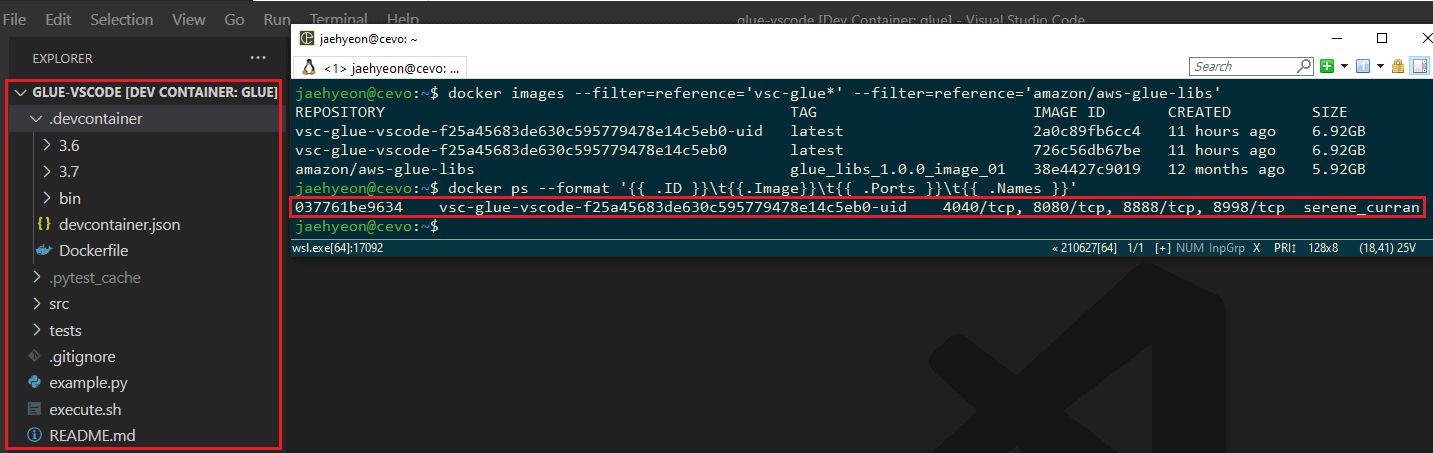

Once the development container is ready, the workspace folder will be open within the container. You will see 2 new images are created from the base Glue image and a container is run from the latest image.

Examples

I’ve created a simple script (execute.sh) to run the executables easily. The main command indicates which executable to run and possible values are pyspark, spark-submit and pytest. Note that the IPython notebook is available but it is not added because I don’t think a notebook is good for development. However you may try by just adding Below shows some example commands.

# pyspark |

# ./execute.sh #!/usr/bin/env bash

## configure python runtime if [ “$version“ == “1” ]; then pyspark_python=python elif [ “$version“ == “2” ]; then pyspark_python=/root/venv/bin/python else echo “unsupported version – $version, only 1 or 2 is accepted” exit 1 fi echo “pyspark python – $pyspark_python“

execution=$1 echo “execution type – $execution“

## remove first argument shift 1 echo $@

## set up command if [ $execution == ‘pyspark’ ]; then sudo su -c “PYSPARK_PYTHON=$pyspark_python /home/aws-glue-libs/bin/gluepyspark” elif [ $execution == ‘spark-submit’ ]; then sudo su -c “PYSPARK_PYTHON=$pyspark_python /home/aws-glue-libs/bin/gluesparksubmit $@“ elif [ $execution == ‘pytest’ ]; then if [ $version == “1” ]; then sudo su -c “PYSPARK_PYTHON=$pyspark_python /home/aws-glue-libs/bin/gluepytest $@“ else sudo su -c “PYSPARK_PYTHON=$pyspark_python /home/aws-glue-libs/bin/gluepytest2 $@“ fi else echo “unsupported execution type – $execution“ exit 1 fi |

Pyspark

Using the script above, we can launch the PySpark shells for each of the environments. Python 3.6.10 is associated with the AWS Glue 1.0 while Python 3.7.3 in a virtual environment is with the AWS Glue 2.0.

Spark Submit

Below shows one of the Python samples in the Glue documentation. It pulls 3 data sets from a database called legislators. Then they are joined to create a history data set (l_history) and saved into S3.

# ./example.py from awsglue.dynamicframe import DynamicFrame |

When the execution completes, we can see the joined data set is stored as a parquet file in the output S3 bucket.

Note that we can monitor and inspect Spark job executions in the Spark UI on port 4040.

Pytest

We can test a function that deals with a DynamicFrame. Below shows a test case for a simple function that filters a DynamicFrame based on a column value.

# ./src/utils.py # ./tests/conftest.py |

Conclusion

In this post, I demonstrated how to build local development environments for AWS Glue 1.0 and 2.0 using Docker and the Visual Studio Code Remote – Containers extension. Then examples of launching Pyspark shells, submitting an application and running a test are shown. I hope this post is useful to develop and test Glue ETL scripts locally.