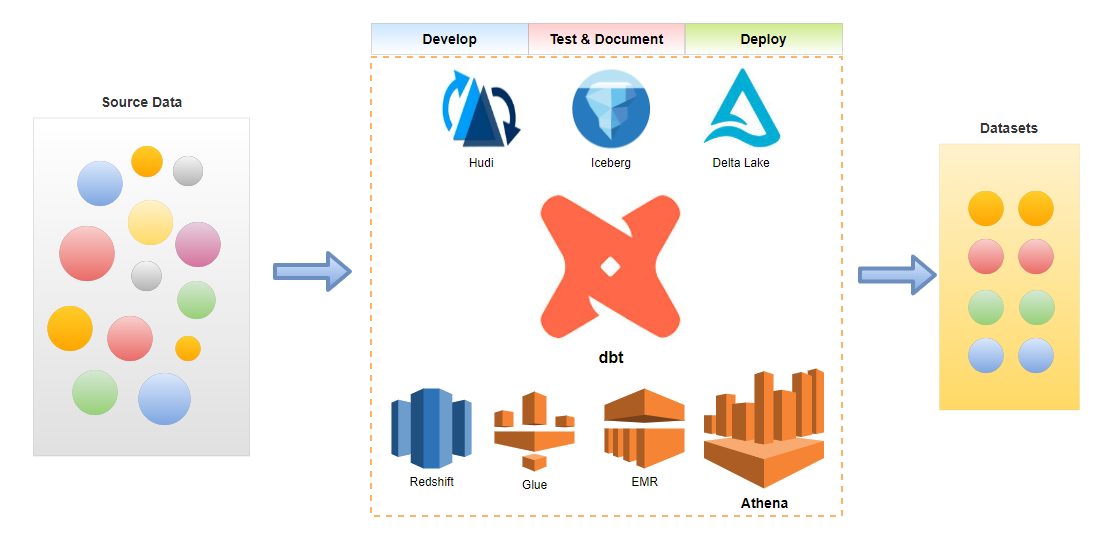

The data build tool (dbt) is an effective data transformation tool and it supports key AWS analytics services – Redshift, Glue, EMR and Athena. In the previous posts, we discussed benefits of a common data transformation tool and the potential of dbt to cover a wide range of data projects from data warehousing to data lake to data lakehouse. Demo data projects that target Redshift Serverless, Glue, EMR on EC2 and EMR on EKS are illustrated as well. In the last part of the dbt on AWS series, we discuss data transformation pipelines using dbt on Amazon Athena. Subsets of IMDb data are used as source and data models are developed in multiple layers according to the dbt best practices. A list of posts of this series can be found below.

Part 5 – Athena (this post)

Below shows an overview diagram of the scope of this dbt on AWS series. Athena is highlighted as it is discussed in this post.

Infrastructure

The infrastructure hosting this solution leverages Athena Workgroup, AWS Glue Data Catalog, AWS Glue Crawlers and a S3 bucket. They are deployed using Terraform and the source can be found in the GitHub repository of this post.

Athena Workgroup

The dbt athena adapter requires an Athena workgroup and it only supports the Athena engine version 2. The workgroup used in the dbt project is created as shown below.

resource “aws_athena_workgroup” “imdb” { |

Glue Databases

We have two Glue databases. The source tables and the tables of the staging and intermediate layers are kept in the imdb database. The tables of the marts layer are stored in the imdb_analytics database.

# athena/infra/main.tf |

Glue Crawlers

We use Glue crawlers to create source tables in the imdb database. We can create a single crawler for the seven source tables but it was not satisfactory, especially header detection. Instead a dedicated crawler is created for each of the tables with its own custom classifier where it includes header columns specifically. The Terraform count meta-argument is used to create the crawlers and classifiers recursively.

# athena/infra/main.tf # athena/infra/variables.tf |

Project

We build a data transformation pipeline using subsets of IMDb data – seven titles and names related datasets are provided as gzipped, tab-separated-values (TSV) formatted files. This results in three tables that can be used for reporting and analysis.

Save Data to S3

The Axel download accelerator is used to download the data files locally followed by decompressing with the gzip utility. Note that simple retry logic is added as I see download failure from time to time. Finally the decompressed files are saved into the project S3 bucket using the S3 sync command.

# athena/upload-data.sh |

Run Glue Crawlers

The Glue crawlers for the seven source tables are executed as shown below.

# athena/start-crawlers.sh |

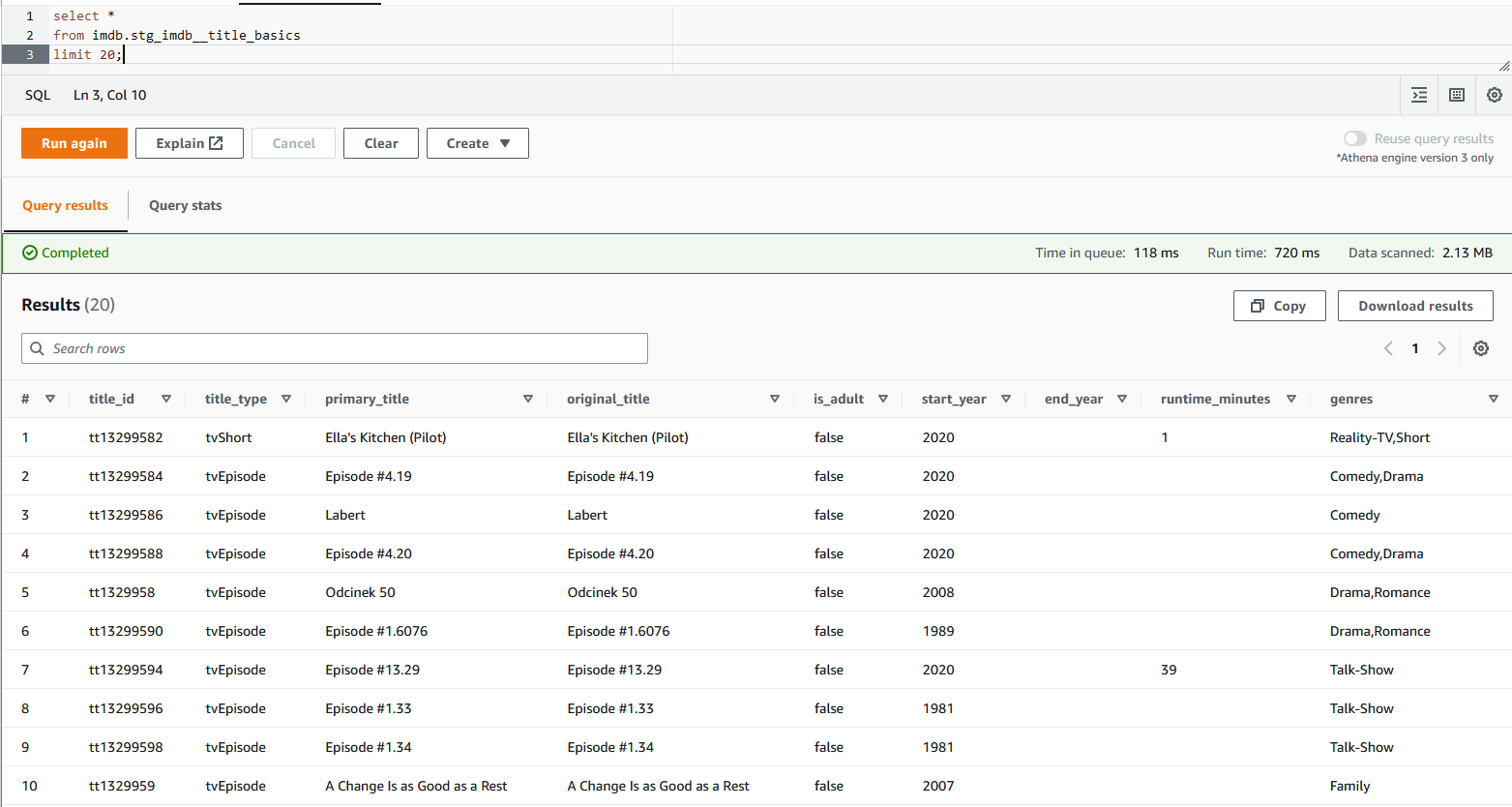

After the crawlers run successfully, we are able to check the seven source tables. Below shows a query example of one of the source tables in Athena.

Setup dbt Project

We need the dbt-core and dbt-athena-adapter packages for the main data transformation project – the former can be installed as dependency of the latter. The dbt project is initialised while skipping the connection profile as it does not allow to select key connection details such as aws_profile_name and threads. Instead the profile is created manually as shown below. Note that, after this post was complete, it announced a new project repository called dbt-athena-community and new features are planned to be supported in the new project. Those new features cover the Athena engine version 3 and Apache Iceberg support and a new dbt targeting Athena is encouraged to use it.

$ pip install dbt-athena-adapter |

The attributes are self-explanatory and their details can be checked further in the GitHub repository of the dbt-athena adapter.

# athena/set-profile.sh #!/usr/bin/env bash |

dbt initialises a project in a folder that matches to the project name and generates project boilerplate as shown below. Some of the main objects are dbt_project.yml, and the model folder. The former is required because dbt doesn’t know if a folder is a dbt project without it. Also it contains information that tells dbt how to operate on the project. The latter is for including dbt models, which is basically a set of SQL select statements. See dbt documentation for more details.

$ tree athena/athena_proj/ -L 1 |

We can check Athena connection with the dbt debug command as shown below.

$ dbt debug |

After initialisation, the model configuration is updated. The project materialisation is specified as view although it is the default materialisation. Also tags are added to the entire model folder as well as folders of specific layers – staging, intermediate and marts. As shown below, tags can simplify model execution.

# athena/athena_proj/dbt_project.yml |

The dbt_utils package is installed for adding tests to the final marts models. The packages can be installed by the dbt deps command.

# athena/athena_proj/packages.yml |

Create dbt Models

The models for this post are organised into three layers according to the dbt best practices – staging, intermediate and marts.

Staging

The seven tables that are loaded from S3 are dbt source tables and their details are declared in a YAML file (_imdb_sources.yml). By doing so, we are able to refer to the source tables with the {{ source() }} function. Also we can add tests to source tables. For example below two tests (unique, not_null) are added to the tconst column of the title_basics table below and these tests can be executed by the dbt test command.

# athena/athena_proj/models/staging/imdb/_imdb__sources.yml … |

Based on the source tables, staging models are created. They are created as views, which is the project’s default materialisation. In the SQL statements, column names and data types are modified mainly.

# athena/athena_proj/models/staging/imdb/stg_imdb__title_basics.sql with source as ( |

Below shows the file tree of the staging models. The staging models can be executed using the dbt run command. As we’ve added tags to the staging layer models, we can limit to execute only this layer by dbt run –select staging.

$ tree athena/athena_proj/models/staging/ |

The views in the staging layer can be queried in Athena as shown below.

Intermediate

We can keep intermediate results in this layer so that the models of the final marts layer can be simplified. The source data includes columns where array values are kept as comma separated strings. For example, the genres column of the stg_imdb__title_basics model includes up to three genre values as shown in the previous screenshot. A total of seven columns in three models are columns of comma-separated strings and it is better to flatten them in the intermediate layer. Also, in order to avoid repetition, a dbt macro (flatten_fields) is created to share the column-flattening logic.

# athena/athena_proj/macros/flatten_fields.sql {% macro flatten_fields(model, field_name, id_field_name) %} |

The macro function can be added inside a common table expression (CTE) by specifying the relevant model, field name to flatten and id field name.

— athena/athena_proj/models/intermediate/title/int_genres_flattened_from_title_basics.sql with flattened as ( |

The intermediate models are also materialised as views and we can check the array columns are flattened as expected.

Below shows the file tree of the intermediate models. Similar to the staging models, the intermediate models can be executed by dbt run –select intermediate.

$ tree athena/athena_proj/models/intermediate/ athena/athena_proj/macros/ |

Marts

The models in the marts layer are configured to be materialised as tables in a custom schema. Their materialisation is set to table and the custom schema is specified as analytics while taking parquet as the file format. Note that the custom schema name becomes imdb_analytics according to the naming convention of dbt custom schemas. Models of both the staging and intermediate layers are used to create final models to be used for reporting and analytics.

— athena/athena_proj/models/marts/analytics/titles.sql {{ |

The details of the three models can be found in a YAML file (_analytics__models.yml). We can add tests to models and below we see tests of row count matching to their corresponding staging models.

# athena/athena_proj/models/marts/analytics/_analytics__models.yml version: 2 |

The models of the marts layer can be tested using the dbt test command as shown below.

$ dbt test –select marts |

Below shows the file tree of the marts models. As with the other layers, the marts models can be executed by dbt run –select marts.

$ tree athena/athena_proj/models/marts/ |

Build Dashboard

The models of the marts layer can be consumed by external tools such as Amazon QuickSight. Below shows an example dashboard. The pie chart on the left shows the proportion of titles by genre while the box plot on the right shows the dispersion of average rating by start year.

Generate dbt Documentation

A nice feature of dbt is documentation. It provides information about the project and the data warehouse and it facilitates consumers as well as other developers to discover and understand the datasets better. We can generate the project documents and start a document server as shown below.

$ dbt docs generate |

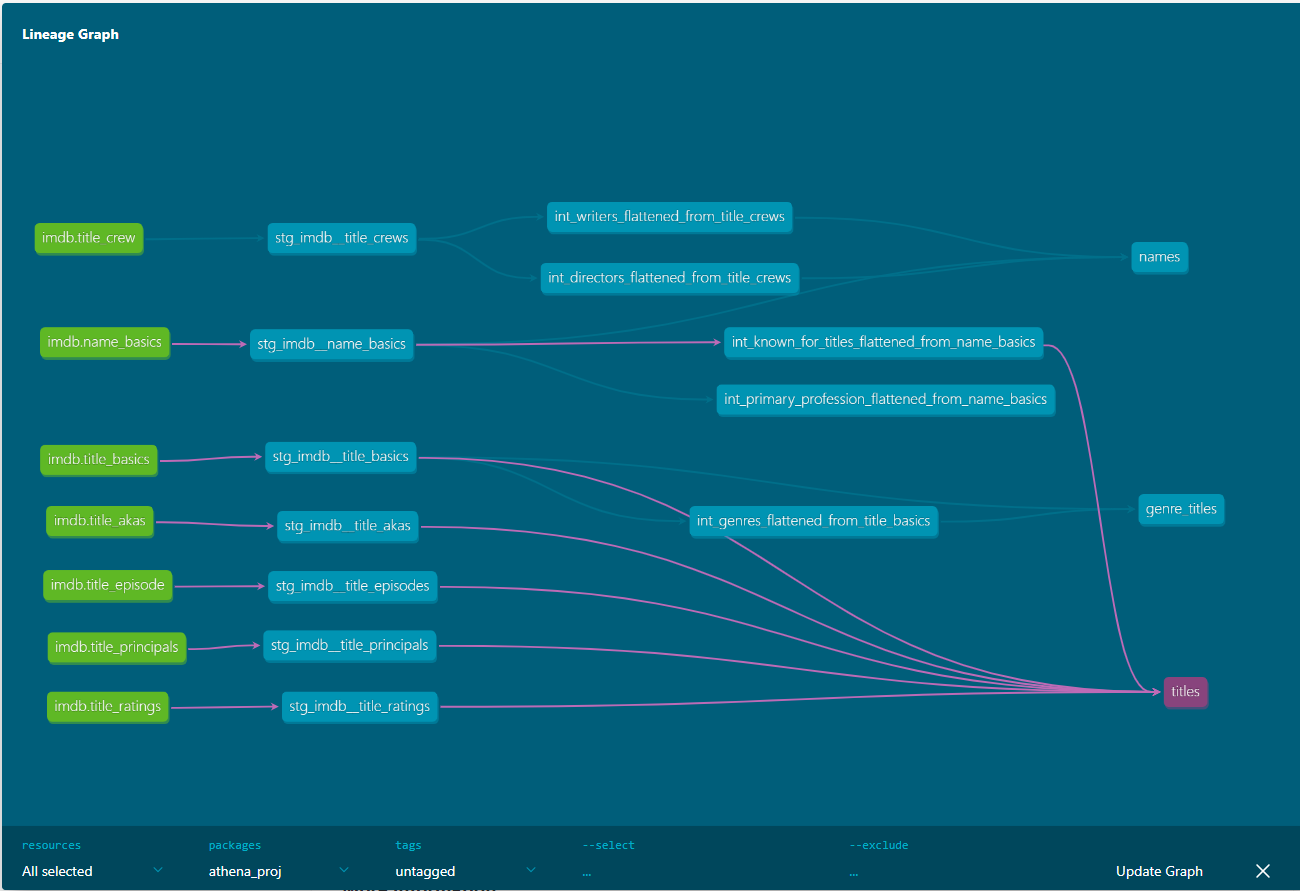

A very useful element of dbt documentation is data lineage, which provides an overall view about how data is transformed and consumed. Below we can see that the final titles model consumes all title-related stating models and an intermediate model from the name basics staging model.

Summary

In this post, we discussed how to build data transformation pipelines using dbt on AWS Athena. Subsets of IMDb data are used as source and data models are developed in multiple layers according to the dbt best practices. dbt can be used as an effective tool for data transformation in a wide range of data projects from data warehousing to data lake to data lakehouse and it supports key AWS analytics services – Redshift, Glue, EMR and Athena. In this series, we mainly focus on how to develop a data project with dbt targeting variable AWS analytics services. It is quite an effective framework for data transformation and advanced features will be covered in a new series of posts.