INTRODUCTION

Like many other activities, database management changes significantly when systems move from a traditional on-prem datacentre to the cloud. Along with the data and applications, we must also migrate our operations, and these will look somewhat different in the cloud.

There are plenty of benefits in moving your on-prem databases into a managed database service in the public cloud (such as AWS RDS), including cost savings, scale, flexibility and security. However, some of these benefits may be at risk if the operating model is not ‘migrated’ along with the databases. This is especially true in highly regulated industries, where traditional practices from the on-premises world may limit what can be achieved in the cloud. Those practices may also expose you to data loss, either through an attacker exploiting a misconfiguration or through deliberate data exfiltration.

Establishing control over who can change database configuration and handle the organisation’s data, and having detective controls built around this, are the first line of defence.

Your on-prem model may range from no automation at all to database lifecycles fully aligned with your CI/CD capability. Wherever you sit on that spectrum, there should be something useful here as we lay out a plan for migrating database operations to the cloud.

GETTING STARTED

We can tackle this problem in four steps:

1. Understand your governance and security model, and how you may need to evidence it

2. Identify your database teams and stakeholders, and craft the user stories

3. Align the user stories with privileges and construct the access model

4. Implement the model

1 – UNDERSTAND YOUR GOVERNANCE AND SECURITY MODEL, AND HOW YOU MAY NEED TO EVIDENCE IT

The industry your organisation is in will influence how much work you need to do, both in setting up your access model and in operating it.

Those of you in large financial services institutions (FSIs) or in companies subject to the Sarbanes-Oxley Act (SOX) will be familiar with audits of access and authorisation controls, and these become a hot-button topic once applications and data are in the public cloud.

For everyone else, there is still a lot of value in implementing strong access controls, because they help enforce good practice in the cloud.

Moving to the cloud introduces new and heightened risks to your organisation’s information assets. We can address these risks with two controls, both built on a strong access and authorisation model as the frontline defence.

The preventative controls here are primarily IAM-based: you take away the keys that allow unrestricted changes, and require platform operators to push changes through source control and a deployment pipeline so that every change passes through the right controls.
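One way to "take the keys away" is a Deny statement that blocks configuration-changing actions for every principal except the deployment pipeline's role, which could be applied, for example, as a service control policy (SCP) in AWS Organizations. The sketch below builds such a policy in Python; the pipeline role ARN, the account ID and the choice of actions are all illustrative assumptions, while `ArnNotLike` and `aws:PrincipalArn` are standard IAM policy elements.

```python
import json

# Hypothetical ARN of the deployment pipeline's role; substitute your own.
PIPELINE_ROLE_ARN = "arn:aws:iam::123456789012:role/db-deploy-pipeline"

# Illustrative configuration-changing RDS actions reserved for the pipeline.
MUTATING_RDS_ACTIONS = [
    "rds:CreateDBInstance",
    "rds:ModifyDBInstance",
    "rds:DeleteDBInstance",
    "rds:ModifyDBParameterGroup",
]


def pipeline_only_policy(actions, pipeline_role_arn):
    """Build a policy that denies `actions` to every principal except the pipeline role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyManualDatabaseChanges",
                "Effect": "Deny",
                "Action": sorted(actions),
                "Resource": "*",
                # The Deny only takes effect when the caller is NOT the pipeline role.
                "Condition": {"ArnNotLike": {"aws:PrincipalArn": pipeline_role_arn}},
            }
        ],
    }


policy = pipeline_only_policy(MUTATING_RDS_ACTIONS, PIPELINE_ROLE_ARN)
print(json.dumps(policy, indent=2))
```

Because an explicit Deny overrides any Allow, this works even if a human role has been granted broad RDS permissions elsewhere.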

Control 1 – Manage configuration and prevent drift: lower the risk of bad configuration and inconsistent environments, which are hard to support and may lead to security vulnerabilities (exposed endpoints, for example). The more consistent your environment is, through infrastructure as code and by preventing manual changes, the less noisy and more effective your detective controls (such as AWS Config or third-party tools such as Cloud Conformity) will be at finding real risks.
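Detective controls for drift boil down to comparing the actual state of a resource with a declared baseline. AWS Config managed rules such as `rds-instance-public-access-check` and `rds-storage-encrypted` perform checks of this kind for you. The sketch below only illustrates the underlying comparison: the baseline values and the `find_drift` helper are assumptions, and the sample dictionary is a hand-written stand-in for one entry of an RDS `DescribeDBInstances` response, whose field names it reuses.

```python
# Hypothetical baseline; keys match fields in the RDS DescribeDBInstances response.
BASELINE = {
    "PubliclyAccessible": False,
    "StorageEncrypted": True,
    "DeletionProtection": True,
    "MultiAZ": True,
}


def find_drift(instance, baseline=BASELINE):
    """Return {setting: (expected, actual)} for every baseline setting the instance violates."""
    drift = {}
    for key, expected in baseline.items():
        actual = instance.get(key)  # None if the field is missing
        if actual != expected:
            drift[key] = (expected, actual)
    return drift


# Hand-written stand-in for one entry of describe_db_instances()["DBInstances"].
sample = {
    "DBInstanceIdentifier": "orders-db",
    "PubliclyAccessible": True,
    "StorageEncrypted": True,
    "DeletionProtection": False,
    "MultiAZ": True,
}

print(find_drift(sample))
# {'PubliclyAccessible': (False, True), 'DeletionProtection': (True, False)}
```

When every change arrives through the pipeline, a non-empty result points to a genuine exception rather than routine manual tweaking.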

Control 2 – Enforce separation of duties: you don’t want one individual to hold a toxic combination of access rights, because someone planning something malicious could then sidestep too many controls. Separating duties helps prevent data exfiltration and other loss of your information assets.
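A toxic combination can be expressed as a set of actions that is safe in separate hands but risky when one person holds all of them. The sketch below checks a principal's granted actions against such sets. The specific combinations are illustrative assumptions (for instance, creating a snapshot and then sharing it with another account via `ModifyDBSnapshotAttribute`), and the helper compares literal action names only, so it does not expand wildcards such as `rds:*`.

```python
# Hypothetical toxic combinations: each set is risky when held by a single person.
TOXIC_COMBINATIONS = [
    # Take a snapshot, then share it with another (possibly external) account.
    frozenset({"rds:CreateDBSnapshot", "rds:ModifyDBSnapshotAttribute"}),
    # Take a snapshot, then export it to an S3 bucket.
    frozenset({"rds:CreateDBSnapshot", "rds:StartExportTask"}),
    # Change database configuration, then switch off the audit trail.
    frozenset({"rds:ModifyDBInstance", "cloudtrail:StopLogging"}),
]


def toxic_combinations(granted_actions):
    """Return every toxic combination fully contained in a principal's granted actions."""
    granted = set(granted_actions)
    return [combo for combo in TOXIC_COMBINATIONS if combo <= granted]


dba_actions = [
    "rds:DescribeDBInstances",
    "rds:CreateDBSnapshot",
    "rds:ModifyDBSnapshotAttribute",
]
for combo in toxic_combinations(dba_actions):
    print("Toxic combination:", sorted(combo))
```

Running a check like this whenever a role definition changes makes separation of duties something you can evidence rather than assert.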

Once these controls are in place, you will be better able to evidence compliance by monitoring with CloudTrail, AWS Config or other tools. What remains is to work through the process of deciding which access is appropriate for whom.

2 – IDENTIFY YOUR DATABASE TEAMS AND STAKEHOLDERS AND CRAFT THE USER STORIES

Your DBAs may have a well-defined set of operational duties, or they may lean towards doing whatever needs to be done. There may not be time to understand every fine-grained access permission required in the cloud, but many of the ‘old’ duties will go away. For SQL Server, examples include tempdb tuning and maintenance plans, plus of course all operating system tasks such as patching and monitoring. Backup teams will also have much less to do in the new world, and the app team will be self-sufficient across a wider range of operational controls.

Ideally, database management will sit with a cross-functional team that manages the complete infrastructure stack in the cloud, possibly using a DevOps approach in which application development and operations work together on the whole application stack. Start by working out which specialised roles you need to associate with the database, and which duties are general enough not to need a dedicated DBA.

3 – ALIGN THE USER STORIES WITH PRIVILEGES AND CONSTRUCT THE ACCESS MODEL

To start, break down these stories: identify the high-level lifecycle activities, then determine the level of access each one requires. Each level can be identified simply as Red or Green access:

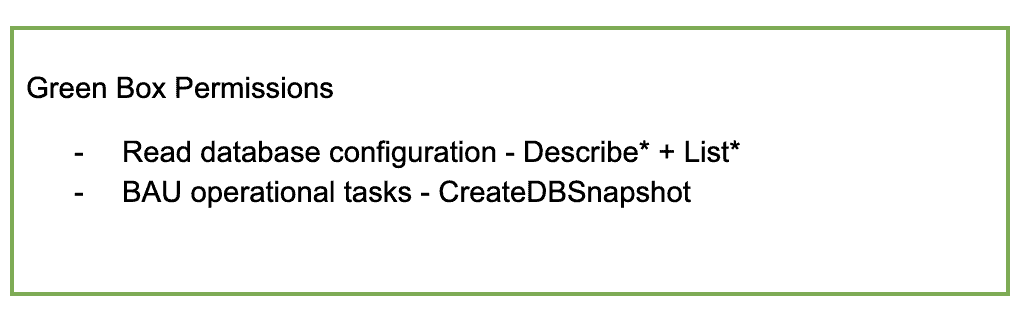

- Green covers the day-to-day operational tasks that we agree are low risk and need no particular attention.

- Red covers enabling activities that may carry some risk, where we need to control who can use them and have visibility of when they are used.

Let’s begin with two simple questions:

‘When I do this work, will it change the configuration of the database or related AWS infrastructure in a way that makes it non-compliant with the configuration items the organisation deems essential?’

‘When I do this work, could I exfiltrate data from the system in a way that the architecture does not already provide for?’ An example would be a remote dump of a database to an external system or storage location.

If the answer to either question is Yes, the activity requires Red access.

Otherwise the access level is Green.
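The two questions form a simple decision rule: Red if either answer is Yes, Green otherwise. The sketch below encodes that rule; the `Activity` type and the sample activities, including how each question is answered for them, are illustrative assumptions rather than a definitive classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Activity:
    name: str
    changes_essential_config: bool        # Question 1: could it break essential configuration?
    enables_unplanned_exfiltration: bool  # Question 2: could it move data out unexpectedly?


def access_level(activity):
    """Red if either question is answered Yes, otherwise Green."""
    if activity.changes_essential_config or activity.enables_unplanned_exfiltration:
        return "Red"
    return "Green"


# Hypothetical activities with illustrative answers to the two questions.
activities = [
    Activity("View instance metrics", False, False),
    Activity("Modify parameter group", True, False),
    Activity("Share snapshot with another account", False, True),
]

for a in activities:
    print(f"{a.name}: {access_level(a)}")
# View instance metrics: Green
# Modify parameter group: Red
# Share snapshot with another account: Red
```

Keeping the two answers as separate fields records why an activity is Red, which is useful evidence when the classification is reviewed.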

4 – IMPLEMENT THE MODEL

Many of the Green access requirements can then be merged into one role, which can potentially have a broader set of read-only style permissions attached. Examples of potential Green access permissions may be:

For example, CreateEventSubscription may go into the Green box while DeleteEventSubscription falls into the Red box; where each action lands will depend on the configuration management boundaries you establish for database services in the cloud.
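Merging the Green requirements into one role amounts to taking the union of the actions behind every Green user story. The sketch below shows that step; the stories, their classifications and the `merge_actions` helper are hypothetical, chosen to mirror the event-subscription split described above.

```python
# Hypothetical user stories: (story, access level, IAM actions it needs).
STORIES = [
    ("Check instance health", "Green", ["rds:DescribeDBInstances", "rds:DescribeEvents"]),
    ("Review parameter settings", "Green", ["rds:DescribeDBParameters"]),
    ("Subscribe to RDS events", "Green", ["rds:CreateEventSubscription", "rds:DescribeEvents"]),
    ("Remove an event subscription", "Red", ["rds:DeleteEventSubscription"]),
]


def merge_actions(stories, level):
    """Union the actions of every story at `level` into one sorted, de-duplicated list."""
    merged = set()
    for _story, story_level, actions in stories:
        if story_level == level:
            merged.update(actions)
    return sorted(merged)


print(merge_actions(STORIES, "Green"))
# ['rds:CreateEventSubscription', 'rds:DescribeDBInstances', 'rds:DescribeDBParameters', 'rds:DescribeEvents']
```

De-duplicating here keeps the resulting role policy short even when many stories share the same read-only actions.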

This in turn would create a Policy that looks like this:
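As an illustrative sketch only, a Green policy might combine a broad read-only wildcard with a few low-risk actions. The action list below is an assumption, not a recommended baseline; the document structure (`Version`, `Statement`, `Effect`, `Action`, `Resource`) is the standard IAM policy grammar, and `rds:Describe*` uses IAM's wildcard matching on action names.

```python
import json

# Illustrative Green actions: broad read-only access plus one low-risk create action.
GREEN_ACTIONS = [
    "rds:Describe*",
    "rds:ListTagsForResource",
    "rds:CreateEventSubscription",
    "cloudwatch:GetMetricData",
    "cloudwatch:ListMetrics",
]


def green_policy(actions):
    """Wrap the Green actions in a single Allow statement."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DatabaseOperatorGreenAccess",
                "Effect": "Allow",
                "Action": list(actions),
                "Resource": "*",
            }
        ],
    }


print(json.dumps(green_policy(GREEN_ACTIONS), indent=2))
```

Where practical, `Resource` can be narrowed from `"*"` to the ARNs of the databases a team actually operates.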

which we can then attach to a role we create called Database-Operator.
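Creating the role needs a trust policy (who may assume it) as well as the permissions policy. The sketch below builds the keyword arguments for boto3's `iam.create_role` and `iam.put_role_policy` without calling AWS, so it can be reviewed or tested offline. The account ID is a placeholder, the `operator_role_requests` helper is hypothetical, and it uses an inline policy for brevity; a managed policy created with `iam.create_policy` and attached with `iam.attach_role_policy` is the usual alternative.

```python
import json

ACCOUNT_ID = "123456789012"  # placeholder account ID


def operator_role_requests(role_name, permissions_policy, account_id=ACCOUNT_ID):
    """Return keyword arguments for boto3's iam.create_role and iam.put_role_policy."""
    trust_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Principals in this account may assume the role if their own
                # identity policies also allow sts:AssumeRole on it.
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
                "Action": "sts:AssumeRole",
            }
        ],
    }
    create_role = {
        "RoleName": role_name,
        "AssumeRolePolicyDocument": json.dumps(trust_policy),
    }
    put_policy = {
        "RoleName": role_name,
        "PolicyName": f"{role_name}-policy",
        "PolicyDocument": json.dumps(permissions_policy),
    }
    return create_role, put_policy


green = {
    "Version": "2012-10-17",
    "Statement": [{"Effect": "Allow", "Action": ["rds:Describe*"], "Resource": "*"}],
}
create_role, put_policy = operator_role_requests("Database-Operator", green)
# With credentials configured, these could be applied with:
#   iam = boto3.client("iam")
#   iam.create_role(**create_role)
#   iam.put_role_policy(**put_policy)
```

In a pipeline-driven setup the same definitions would normally live in infrastructure as code rather than in an ad hoc script.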

For Red access scenarios, our policies will include more of the higher-risk actions, e.g.:
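A Red policy can be built the same way as a Green one, but it helps to insist that Red actions are listed explicitly rather than with wildcards, so that each one can be matched exactly by CloudTrail-based alerts. The sketch below enforces that rule; the action list is an illustrative assumption drawn from actions that change essential configuration or can move data out, and `red_policy` is a hypothetical helper.

```python
# Illustrative Red actions: each changes essential configuration or can move data out.
RED_ACTIONS = [
    "rds:ModifyDBInstance",
    "rds:DeleteDBInstance",
    "rds:ModifyDBParameterGroup",
    "rds:DeleteEventSubscription",
    "rds:ModifyDBSnapshotAttribute",
    "rds:StartExportTask",
    "rds:RestoreDBInstanceFromDBSnapshot",
]


def red_policy(actions):
    """Build an Allow policy for explicitly listed high-risk actions."""
    wildcards = [a for a in actions if "*" in a]
    if wildcards:
        # Wildcards would hide exactly which risky actions the role can perform.
        raise ValueError(f"Red actions must be listed explicitly: {wildcards}")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DatabaseRedAccess",
                "Effect": "Allow",
                "Action": sorted(set(actions)),
                "Resource": "*",
            }
        ],
    }


print(red_policy(RED_ACTIONS)["Statement"][0]["Action"])
```

An explicit list also makes reviews easier: adding a new Red action becomes a visible change in source control.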

Once these are set up, we can attach them to a role such as Database-RED-access, which can then be used for break-glass access or integrated into a Privileged Access Management (PAM) solution.
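For break-glass use, the trust policy of the Red role can require multi-factor authentication and keep sessions short. The sketch below builds the keyword arguments for boto3's `iam.create_role`, using the real `aws:MultiFactorAuthPresent` condition key and the `MaxSessionDuration` parameter (in seconds, with 3600 as the minimum). The account ID, the one-hour default and the `break_glass_role_request` helper are assumptions; a PAM solution may impose its own, stricter mechanism instead.

```python
import json

ACCOUNT_ID = "123456789012"  # placeholder account ID


def break_glass_role_request(role_name, account_id=ACCOUNT_ID, max_session_seconds=3600):
    """Return keyword arguments for boto3's iam.create_role for an MFA-protected role."""
    trust_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
                "Action": "sts:AssumeRole",
                # Only callers who authenticated with MFA may assume the role.
                "Condition": {"Bool": {"aws:MultiFactorAuthPresent": "true"}},
            }
        ],
    }
    return {
        "RoleName": role_name,
        "AssumeRolePolicyDocument": json.dumps(trust_policy),
        # Keep break-glass sessions short; 3600 seconds is the lowest allowed value.
        "MaxSessionDuration": max_session_seconds,
    }


request = break_glass_role_request("Database-RED-access")
# With credentials configured: boto3.client("iam").create_role(**request)
```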

We also want visibility of when people make changes. By enabling CloudTrail with delivery to CloudWatch Logs, we can create alarms that send notifications via SNS whenever any action in the Red box is used, giving you observability into the compliance of your database infrastructure.
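Alerting on Red actions starts with a CloudWatch Logs metric filter over the log group that CloudTrail delivers to. The sketch below builds a filter pattern in CloudWatch Logs' JSON pattern syntax, matching RDS events whose `eventName` is one of the Red actions (CloudTrail records names such as `ModifyDBInstance`, without the `rds:` prefix), and the keyword arguments for boto3's `logs.put_metric_filter`. The log group, filter, metric and namespace names are hypothetical.

```python
# Illustrative Red actions, written as CloudTrail event names (no "rds:" prefix).
RED_EVENT_NAMES = [
    "ModifyDBInstance",
    "DeleteDBInstance",
    "ModifyDBSnapshotAttribute",
    "StartExportTask",
]


def red_filter_pattern(event_names):
    """Build a CloudWatch Logs filter pattern matching RDS CloudTrail events with these names."""
    names = " || ".join(f'($.eventName = "{n}")' for n in event_names)
    return f'{{ ($.eventSource = "rds.amazonaws.com") && ({names}) }}'


def metric_filter_request(log_group, pattern):
    """Return keyword arguments for boto3's logs.put_metric_filter."""
    return {
        "logGroupName": log_group,
        "filterName": "database-red-access",
        "filterPattern": pattern,
        "metricTransformations": [
            {
                "metricName": "DatabaseRedAccessCount",
                "metricNamespace": "DatabaseAccess",
                "metricValue": "1",  # count one per matching event
            }
        ],
    }


pattern = red_filter_pattern(RED_EVENT_NAMES)
request = metric_filter_request("CloudTrail/DefaultLogGroup", pattern)
print(pattern)
```

An alarm on the resulting metric, created with `cloudwatch.put_metric_alarm` using `Statistic="Sum"`, `Threshold=1`, `ComparisonOperator="GreaterThanOrEqualToThreshold"` and an SNS topic ARN in `AlarmActions`, would then notify the team each time a Red action is used.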

With these base-level controls in place, and a model that identifies who needs what access and under which circumstances that access should be used, you can demonstrate control over the databases being migrated to the cloud.

As the team and tooling mature, more of the activities that require Red access should be automated and driven from a pipeline. The less often you hand-craft your cloud infrastructure, the closer the configuration will stay to its intended state, and the easier exceptions will be to find and fix.