When I wrote my data lake demo series (part 1, part 2 and part 3) recently, I used Aurora PostgreSQL, MSK and EMR clusters. All of them were deployed to private subnets, and the dedicated infrastructure was created using CloudFormation. Using an infrastructure as code (IaC) tool helped a lot, but it resulted in 7 CloudFormation stacks, which became a bit hard to manage in the end. I then looked into how to simplify building infrastructure and managing resources on AWS, and decided to use Terraform instead. It has useful constructs (e.g. meta-arguments) that make it simpler to create and manage resources, and a wide range of modules that facilitate development significantly.

In this post, we’ll build an infrastructure for development on AWS with Terraform. A VPN server is also included to improve the developer experience by making resources in private subnets accessible from developer machines.

Architecture

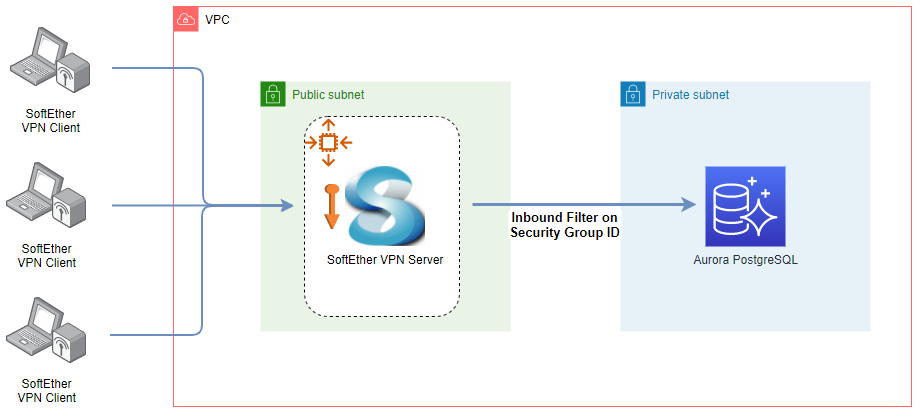

The infrastructure that we’ll discuss in this post is shown in the architecture diagram. The database is deployed in a private subnet, so it cannot be accessed directly from the developer machine. To work around this, we can construct a PC-to-PC VPN with SoftEther VPN. The VPN server runs in a public subnet and is managed by an autoscaling group that maintains only a single instance. A bootstrap script associates an elastic IP address with the instance so that its public IP doesn’t change even if the EC2 instance is recreated. We can add users with the server manager program and they can access the server with the client program. Access from the VPN server to the database is allowed by adding an inbound rule whose source is the VPN server’s security group ID.

Note that another option is AWS Client VPN, but it is considerably more expensive. With 2 private subnets, endpoint association alone would cost $0.30/hour in the Sydney region. Each connection is charged a further $0.05/hour, so the minimum charge with a single connection is $0.35/hour. In contrast, the SoftEther VPN server runs on a t3.nano instance, which costs only $0.0066/hour.
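The hourly figures above can be checked with a little arithmetic. The per-association rate of 0.15 USD/hour is inferred from the 0.30 USD/hour quoted for two subnets, a single active connection is assumed, and only the instance price is counted on the SoftEther side:

$$
\begin{aligned}
\text{AWS Client VPN} &= 2 \times 0.15 + 1 \times 0.05 = 0.35 \ \text{USD/hour} \\
\text{SoftEther on t3.nano} &= 0.0066 \ \text{USD/hour} \\
\text{ratio} &= \frac{0.35}{0.0066} \approx 53
\end{aligned}
$$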

Even developing against a single database can require a whole stack of resources, and Terraform can be of great help in creating and managing them. A VPN can also improve the developer experience significantly, as it makes those resources accessible from developer machines. This post illustrates how to access a database, but the same approach works for other resources such as MSK, EMR, ECS and EKS.

Infrastructure

Terraform can be installed in multiple ways and the CLI has intuitive commands to manage AWS infrastructure. The key commands are:

init – It is used to initialize a working directory containing Terraform configuration files.

plan – It creates an execution plan, which lets you preview the changes that Terraform plans to make to your infrastructure.

apply – It executes the actions proposed in a Terraform plan.

destroy – It is a convenient way to destroy all remote objects managed by a particular Terraform configuration.

The GitHub repository for this post has the following directory structure. Terraform resources are grouped into 4 files and they’ll be discussed further below. The remaining files are supporting elements and their details can be found in the language reference.

$ tree |

VPC

We can use the AWS VPC module to construct a VPC. A Terraform module is a container for multiple resources and it makes it easier to manage related resources. A VPC spanning 2 availability zones is defined, with a private and a public subnet configured in each of them. Optionally, a NAT gateway is added to a single availability zone only.

# vpc.tf module “vpc” { |
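The full listing is in the repository. As a rough illustration of the shape such a module call might take, here is a minimal sketch using the public `terraform-aws-modules/vpc/aws` module. The VPC name and CIDR ranges are hypothetical and not taken from the repository, and the AWS provider is assumed to be configured elsewhere.

```hcl
# Illustrative sketch only; not the repository's vpc.tf.
# Names and CIDR ranges below are hypothetical.

data "aws_availability_zones" "available" {
  state = "available"
}

locals {
  # Take the first 2 availability zones of the configured region.
  azs = slice(data.aws_availability_zones.available.names, 0, 2)
}

module "vpc" {
  source = "terraform-aws-modules/vpc/aws"

  name = "dev-vpc"
  cidr = "10.0.0.0/16"
  azs  = local.azs

  # One public and one private subnet per availability zone.
  public_subnets  = ["10.0.0.0/24", "10.0.1.0/24"]
  private_subnets = ["10.0.10.0/24", "10.0.11.0/24"]

  # A single NAT gateway shared by all private subnets,
  # rather than one per availability zone.
  enable_nat_gateway = true
  single_nat_gateway = true

  enable_dns_hostnames = true
}
```

Sharing a single NAT gateway keeps the cost down for a development environment, at the price of losing outbound connectivity from the private subnets if its availability zone fails.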

Key Pair

An optional key pair is created. It can be used to access an EC2 instance via SSH. The PEM file will be saved to the key-pair folder once created.

# keypair.tf resource “tls_private_key” “pk” { |
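For orientation, a key pair of this kind can be sketched with the `tls`, `aws` and `local` providers as follows. Only the `tls_private_key.pk` resource name comes from the listing above. The key name, file name and RSA settings are assumptions made for illustration.

```hcl
# Illustrative sketch only; not the repository's keypair.tf.

# Generate a private key. Note that it is also stored in the Terraform state.
resource "tls_private_key" "pk" {
  algorithm = "RSA"
  rsa_bits  = 4096
}

# Register the public half with EC2 (key name is hypothetical).
resource "aws_key_pair" "this" {
  key_name   = "dev-key"
  public_key = tls_private_key.pk.public_key_openssh
}

# Save the PEM file to the key-pair folder with owner read-only permission.
resource "local_sensitive_file" "pem" {
  filename        = "${path.module}/key-pair/dev-key.pem"
  content         = tls_private_key.pk.private_key_pem
  file_permission = "0400"
}
```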

VPN

The AWS Auto Scaling Group (ASG) module is used to manage the SoftEther VPN server. The ASG maintains a single EC2 instance in one of the public subnets. The user data script (bootstrap.sh) is configured to run at launch and it’ll be discussed below. Note that other resources are necessary for the VPN server to work correctly, and those can be found in vpn.tf.

Also note that the VPN resource requires a number of configuration values. While most of them have default values or are automatically determined, the IPsec Pre-Shared key (vpn_psk) and administrator password (admin_password) do not have default values. They need to be specified when running the plan, apply and destroy commands. Finally, if the variable vpn_limit_ingress is set to true, the inbound rules of the VPN security group are limited to the IP address of the machine running Terraform.

# _variables.tf |
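The three variables named above could be declared roughly as in the sketch below. The variable names come from the text, while the descriptions, the `sensitive` flags and the default for `vpn_limit_ingress` are assumptions, not the repository's values.

```hcl
# Illustrative sketch only; not the repository's _variables.tf.

variable "vpn_psk" {
  description = "IPsec Pre-Shared key of the VPN server"
  type        = string
  sensitive   = true # keeps the value out of plan/apply output
  # No default, so a value must be supplied on every run.
}

variable "admin_password" {
  description = "Administrator password of the VPN server"
  type        = string
  sensitive   = true
}

variable "vpn_limit_ingress" {
  description = "Limit VPN ingress to the IP address of the running machine"
  type        = bool
  default     = true # hypothetical default
}

# Values without defaults can be passed on the command line, e.g.
#   terraform apply -var "vpn_psk=YOUR_PSK" -var "admin_password=YOUR_PASSWORD"
# or via the environment variables TF_VAR_vpn_psk and TF_VAR_admin_password.
```

Because the same values are needed for plan, apply and destroy, exporting them as `TF_VAR_` environment variables once per shell session can be more convenient than repeating the `-var` flags.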

The bootstrap script associates the elastic IP address and then starts the SoftEther VPN server in a Docker container. It accepts the pre-shared key (vpn_psk) and administrator password (admin_password) as environment variables. The Virtual Hub name is set to DEFAULT.

# scripts/bootstrap.sh #!/bin/bash -ex |

Database

An Aurora PostgreSQL cluster is created using the AWS RDS Aurora module. It is set to have only a single instance and is deployed to a private subnet. Note that a security group (vpn_access) is created that allows access from the VPN server and it is added to vpc_security_group_ids.

# aurora.tf

|
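The security group mentioned above is what opens the database to VPN users. A minimal sketch of such a rule follows. Only the `vpn_access` name comes from the text. The two input variables are hypothetical stand-ins for values that would normally come from the VPC module and the VPN resources, and port 5432 is simply the PostgreSQL default rather than a value confirmed by the repository.

```hcl
# Illustrative sketch only; not the repository's aurora.tf.

variable "vpc_id" {
  description = "ID of the VPC (hypothetical input)"
  type        = string
}

variable "vpn_security_group_id" {
  description = "Security group ID of the VPN server (hypothetical input)"
  type        = string
}

resource "aws_security_group" "vpn_access" {
  name   = "vpn-access"
  vpc_id = var.vpc_id
}

# Allow PostgreSQL traffic only when it originates from the VPN server's security group.
resource "aws_security_group_rule" "postgres_from_vpn" {
  type                     = "ingress"
  description              = "PostgreSQL access from the VPN server"
  from_port                = 5432
  to_port                  = 5432
  protocol                 = "tcp"
  security_group_id        = aws_security_group.vpn_access.id
  source_security_group_id = var.vpn_security_group_id
}
```

Referencing the source security group instead of a CIDR range means the rule keeps working when the VPN instance is recreated and its private IP changes.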

VPN Configuration

Both the VPN Server Manager and Client can be obtained from the download centre. The server and client configuration are illustrated below.

VPN Server

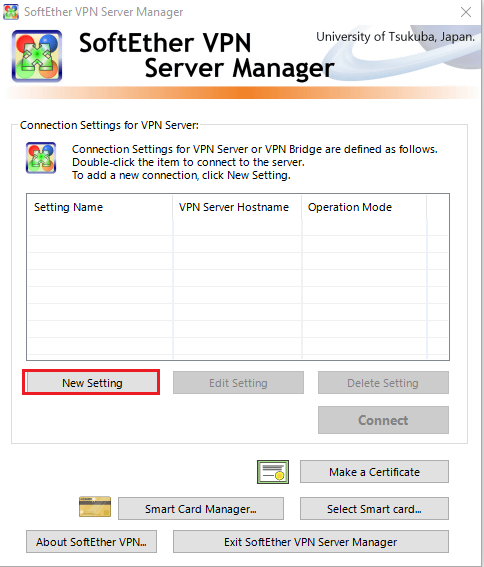

We can begin with adding a new setting.

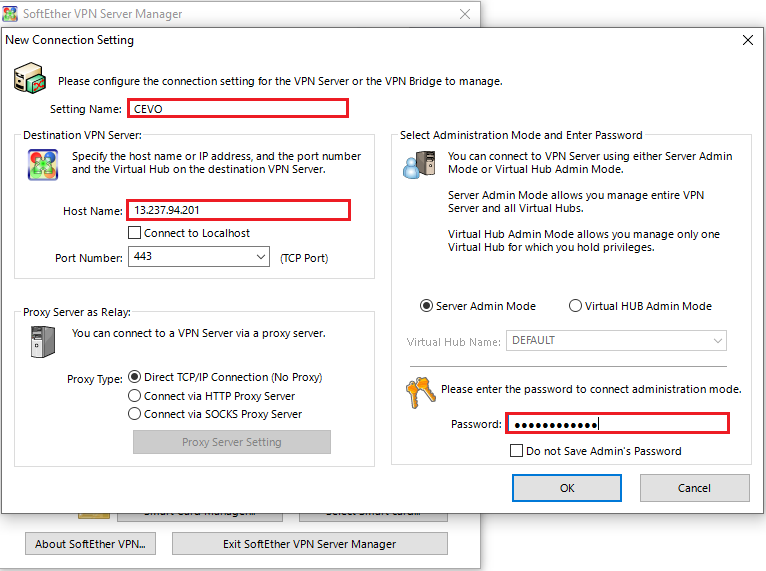

We need to fill in the input fields in the red boxes below. It’s possible to use the elastic IP address as the host name, and the administrator password should match the one passed to Terraform.

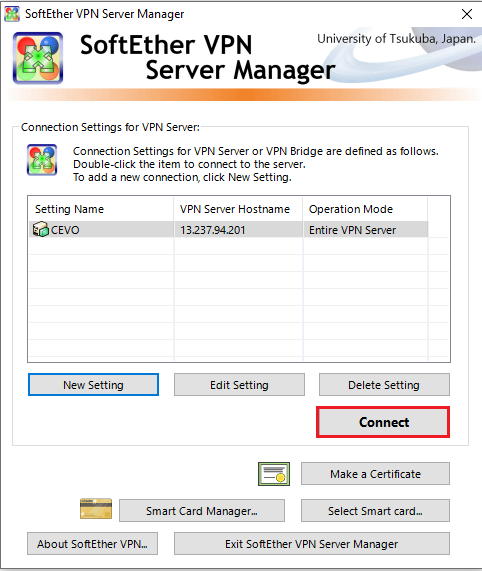

Then we can make a connection to the server by clicking the connect button.

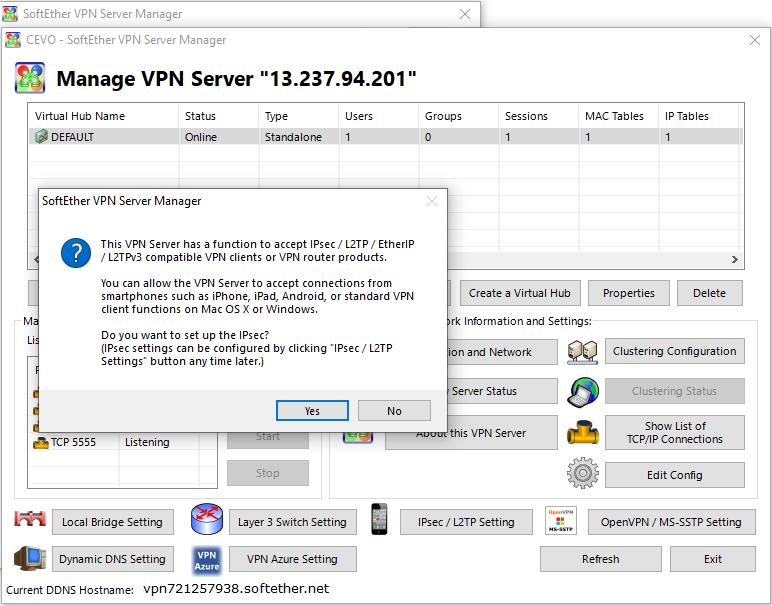

If it’s the first attempt, we’ll see the following pop-up message and we can click yes to set up IPsec.

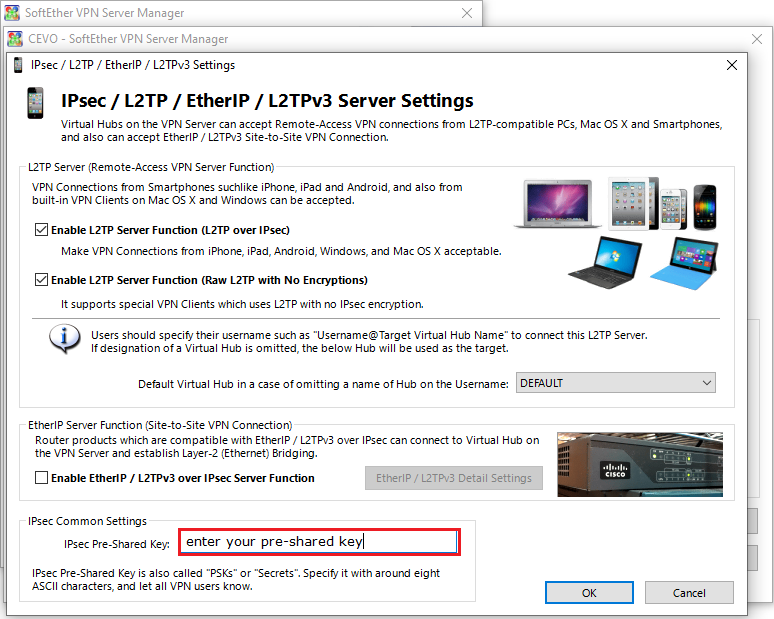

In the dialog, we just need to enter the IPsec Pre-Shared key and click ok.

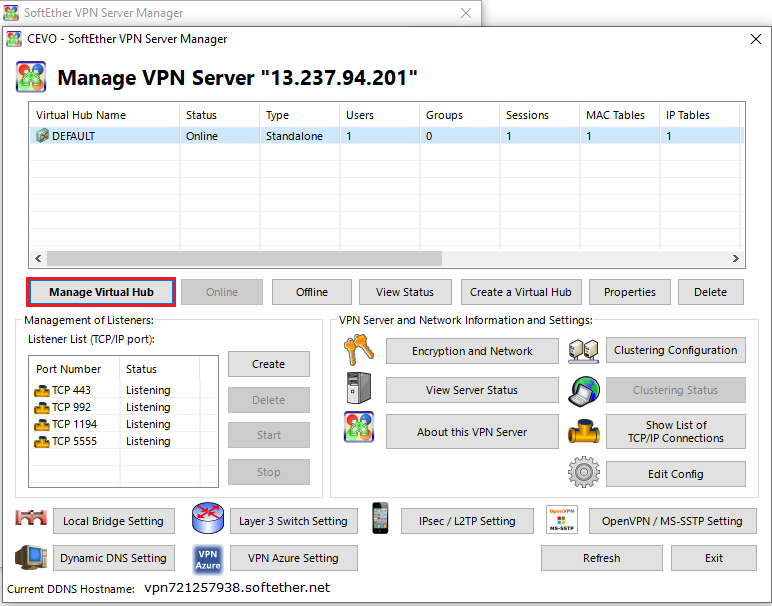

Once a connection is made successfully, we can manage the Virtual Hub by clicking the manage virtual hub button. Note that we created a Virtual Hub named DEFAULT and the session will be established on that Virtual Hub.

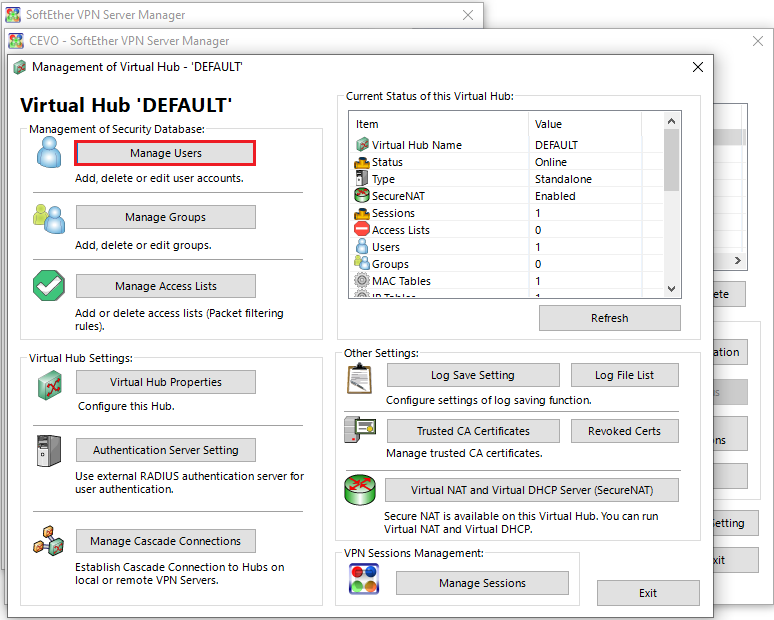

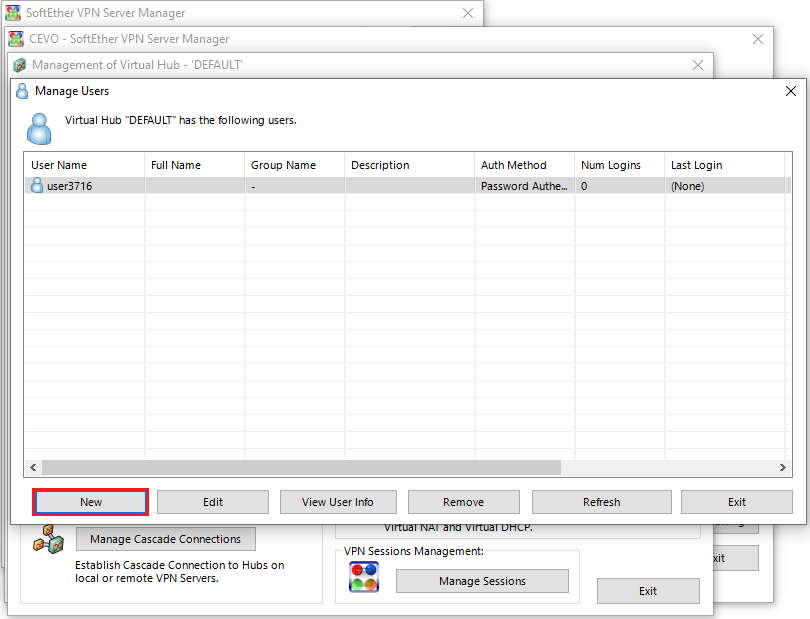

We can create a new user by clicking the manage users button and then the new button.

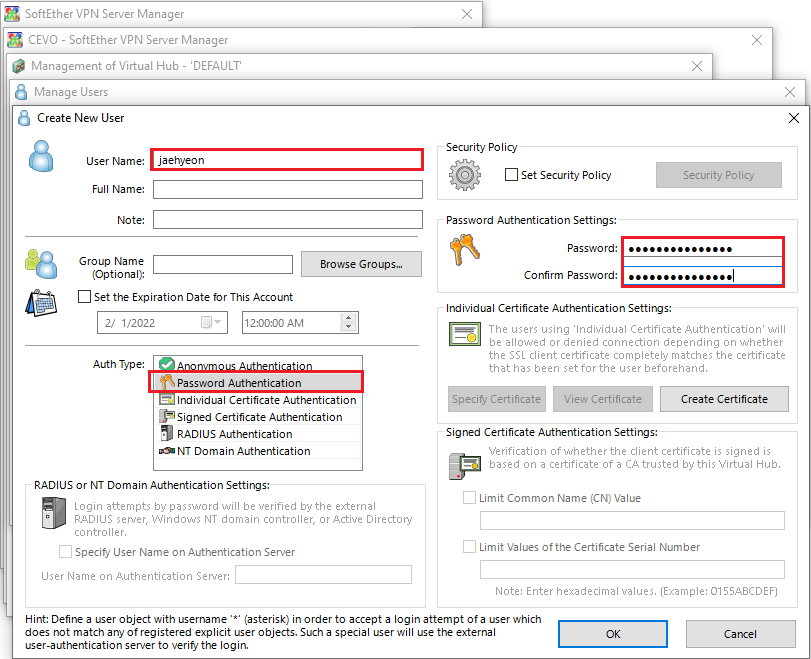

For simplicity, we can use Password Authentication as the auth type and enter the username and password.

A new user is created and we can use the credentials on the client program to make a connection to the server.

VPN Client

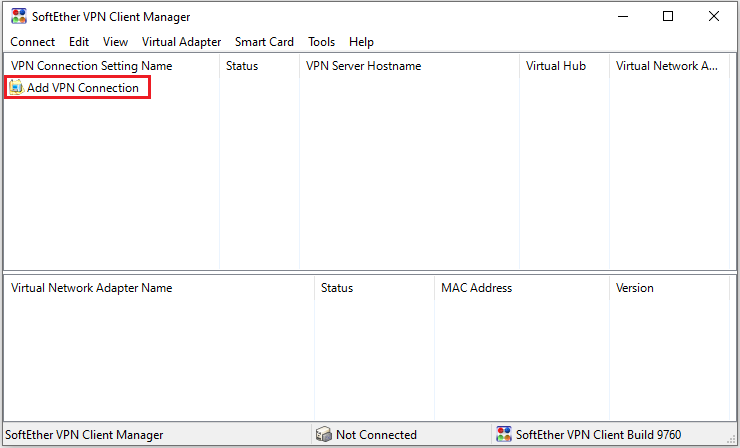

We can add a VPN connection by clicking the menu shown below.

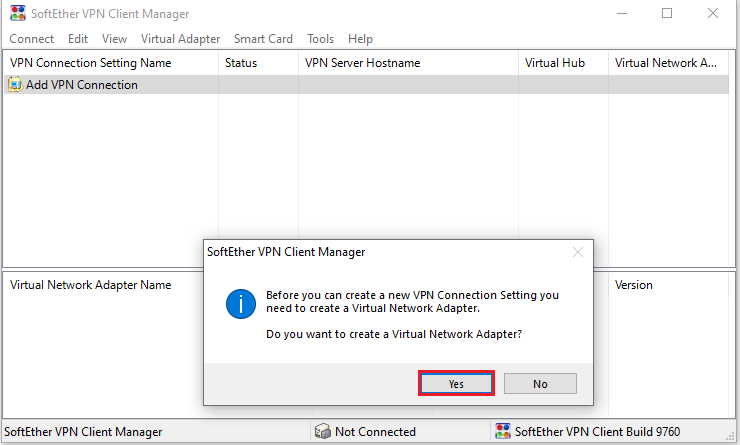

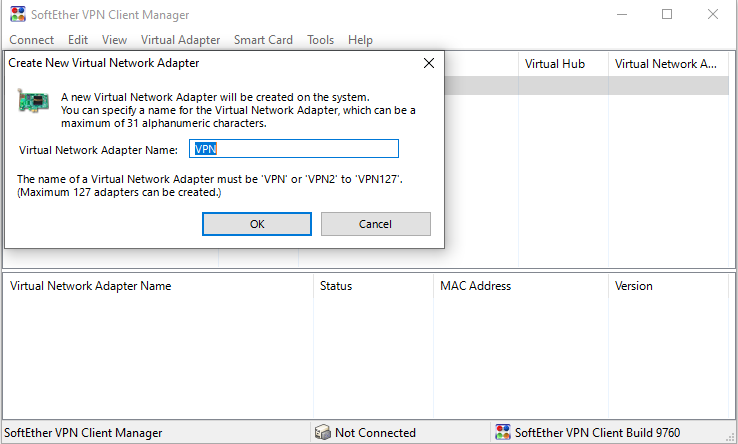

We’ll need to create a Virtual Network Adapter, so we should click the yes button.

In the new dialog, we can enter the adapter name and hit ok. Note that administrator privileges are required to create a new adapter.

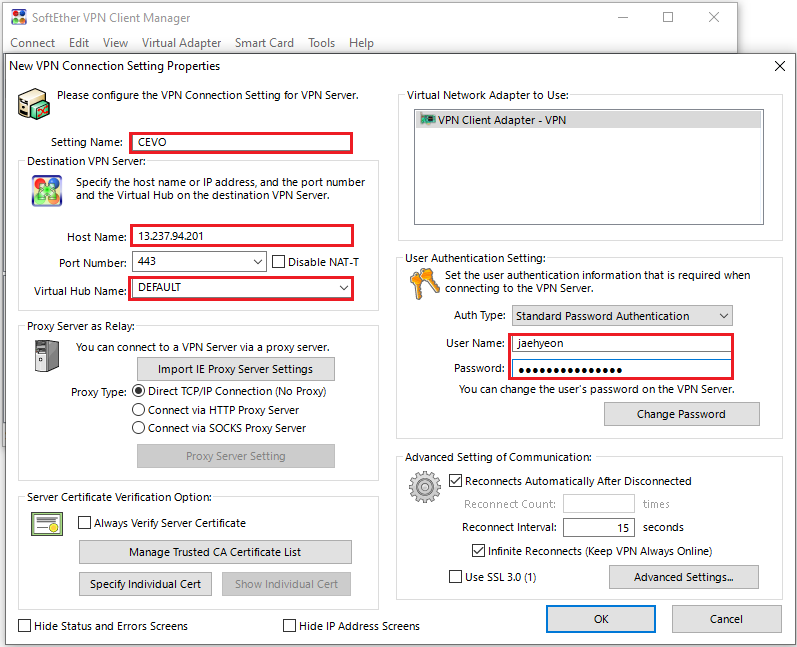

Then a new dialog box will be shown. We can add a connection by filling in the input fields in the red boxes below. The VPN server details should match what was created by Terraform, and the user credentials created in the previous section can be used.

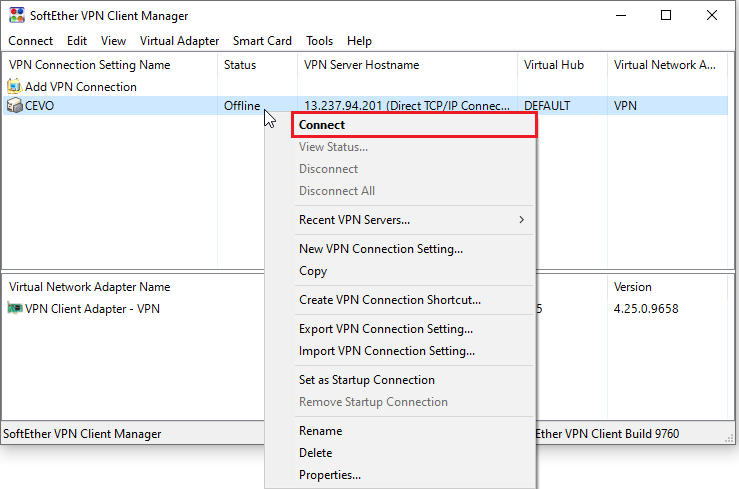

Once a connection is added, we can make a connection to the VPN server by right-clicking the item and clicking the connect menu.

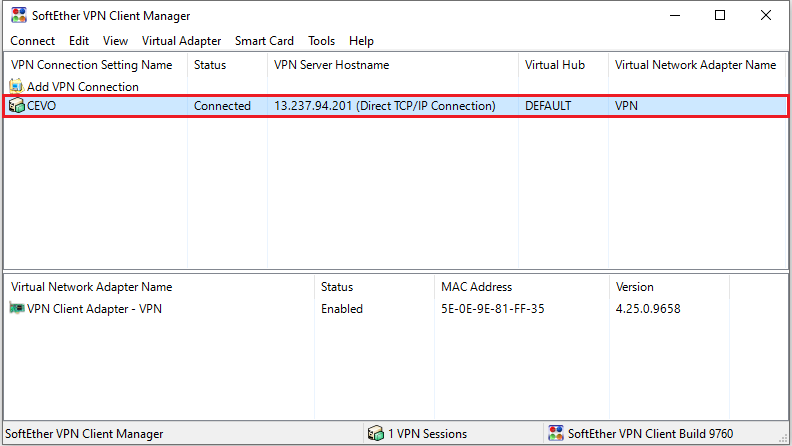

We can see that the status has changed to connected.

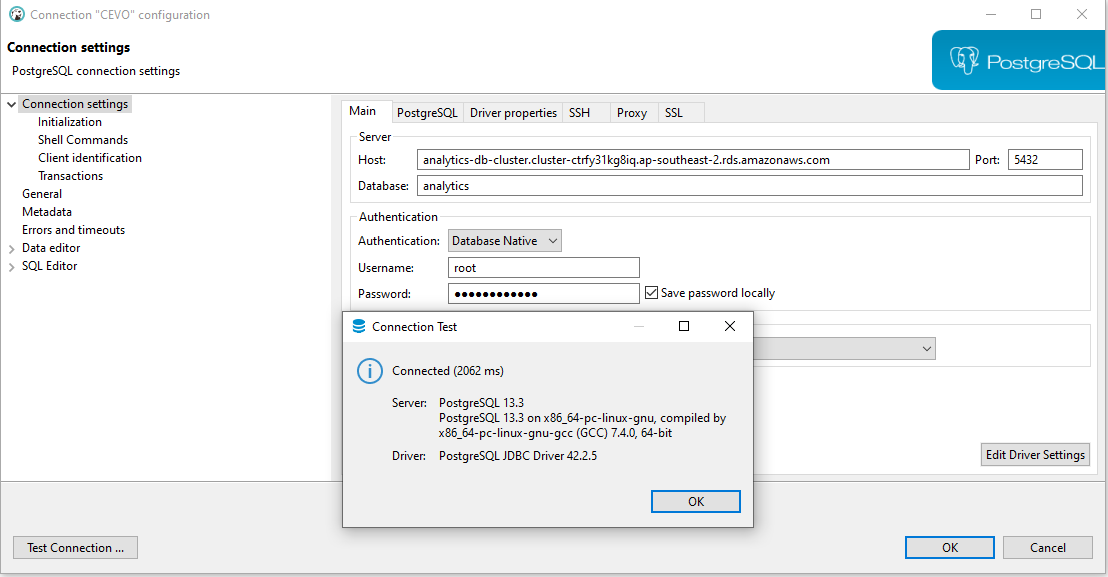

Once connected to the VPN server, we can access the database that is deployed in the private subnet. Testing with a database client confirms that the connection succeeds.

Summary

In this post, we discussed how to set up a development infrastructure on AWS with Terraform, which is an effective way of managing AWS resources. An Aurora PostgreSQL cluster was created in a private subnet and SoftEther VPN was configured so that the database can be accessed from the developer machine.