Unit testing is one of the most important skills a developer can learn; it allows them to find and resolve their own mistakes quickly, which improves development time threefold.

A common unit testing methodology is Test Driven Development (TDD). When done properly, this approach can be very effective; when done wrong, it can become a roadblock that developers have to overcome.

THE LESSON

A few years back, I took the lead on a brand new time and attendance application, and it was here that I saw both a right way and a wrong way of writing unit tests. The application would track an employee’s work times and calculate their entitlements and hours. The calculations were complex, with hundreds of different scenarios. We had to deliver this solution in five weeks and decided to run one-week sprints.

I split this system into two major components: the rules engine, which performed the calculations, and the export engine, which exported the results to the payroll team. The rules engine was a hit. It was so fluid and fully unit tested. However, the export system barely held together despite also being fully unit tested.

When I analysed the time and attendance solution in retrospect, I saw that the successful and fluid rules engine had unit tests that exercised only the main public method. All of the hundreds of tests in this area interacted with this single entry method, despite there being many other helper methods in the process. The export engine, however, was built with strict TDD on every single public method, including smaller helper methods in different classes.

Because the unit tests ran against the entry method of the rules engine, we were able to change the internal implementation of the engine without worrying that we would break unit tests. As new requirements came in, we could confidently make radical implementation changes because the entry method never changed. In an agile environment running one-week sprints, this was perfect. We could change our implementation to meet rapidly changing requirements without having to change all our tests!
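
To make this concrete, here is a minimal Python sketch of the idea. It is not the actual rules engine: the function names (`weekly_overtime_v1`, `weekly_overtime_v2`, `_overtime_for_day`, `check_overtime_behaviour`) and the eight-hour daily limit are assumptions invented for illustration. Two different implementations of the same entry point pass the same behaviour checks, because the checks never mention a helper.

```python
from typing import Callable, Sequence


# Implementation A (hypothetical): the entry point delegates to a helper.
def _overtime_for_day(hours: float, daily_limit: float) -> float:
    return max(hours - daily_limit, 0.0)


def weekly_overtime_v1(daily_hours: Sequence[float], daily_limit: float = 8.0) -> float:
    return sum(_overtime_for_day(h, daily_limit) for h in daily_hours)


# Implementation B (hypothetical): after a refactor the helper is gone,
# but the entry point keeps the same signature and behaviour.
def weekly_overtime_v2(daily_hours: Sequence[float], daily_limit: float = 8.0) -> float:
    total = 0.0
    for hours in daily_hours:
        if hours > daily_limit:
            total += hours - daily_limit
    return total


# The checks only know about the entry point, so they work for both versions.
def check_overtime_behaviour(weekly_overtime: Callable[..., float]) -> None:
    assert weekly_overtime([8, 8, 8, 8, 8]) == 0.0
    assert weekly_overtime([10, 8, 9, 8, 6]) == 3.0
    assert weekly_overtime([]) == 0.0
    assert weekly_overtime([10, 10], daily_limit=9.0) == 2.0


def test_overtime_before_refactor():
    check_overtime_behaviour(weekly_overtime_v1)


def test_overtime_after_refactor():
    check_overtime_behaviour(weekly_overtime_v2)
```

Had a test called `_overtime_for_day` directly, removing that helper in the refactor would have broken it even though the behaviour of the entry point did not change.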

The payroll export unit tests, on the other hand, were written with very strict TDD on ALL public helper methods, even those that were only used by higher-level public methods. As requirements changed or became clearer, it was cumbersome to change the internal implementation because we would break hundreds of unit tests, which meant time and effort to fix them. We became reluctant to refactor and clean up our code, leading to an accumulation of technical debt that slowed down development in this area.

Not only that, I found redundant tests: one for each helper method, and then another for the main public function to ensure that everything was tied together. What was the point in this? It violates DRY (don’t repeat yourself).

THE PRINCIPLE

The principle we can take away here is that we need to write unit tests in a way that encourages us to develop and refactor. We can do this by writing unit tests that do not lock us into an implementation. This can be achieved by writing unit tests as close as possible to the entrypoint or, in other words, higher up the call stack than is normal.

The conclusion that follows goes against the current norm: unit tests written on helper functions could be seen as a code smell. Do not write unit tests on helper methods, as this ties you to an implementation. Helper function code is exercised by entrypoint functions* anyway, so you will get coverage that way and don’t have to write the same test twice: once for the helper and once for the entry point.
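
As a rough illustration, the following Python sketch tests a worked-time calculation only through its entry point. Everything in it is hypothetical: the names (`calculate_worked_hours`, `_minutes_between`, `_subtract_break`, `_round_down_to_quarter_hour`) and the rules themselves (unpaid breaks, rounding down to the quarter hour) are assumptions made up for this example, not the real calculation engine.

```python
from datetime import datetime


# Hypothetical helpers: internal details that no test calls directly.
def _minutes_between(start: datetime, end: datetime) -> int:
    return int((end - start).total_seconds() // 60)


def _subtract_break(minutes: int, break_minutes: int) -> int:
    return max(minutes - break_minutes, 0)


def _round_down_to_quarter_hour(minutes: int) -> int:
    return minutes - (minutes % 15)


# Hypothetical entry point: the only function the tests know about.
def calculate_worked_hours(start: datetime, end: datetime, break_minutes: int = 0) -> float:
    minutes = _minutes_between(start, end)
    minutes = _subtract_break(minutes, break_minutes)
    minutes = _round_down_to_quarter_hour(minutes)
    return minutes / 60


# Each test describes a behaviour, not a helper.
def test_full_day_with_lunch_break():
    start = datetime(2024, 1, 8, 9, 0)
    end = datetime(2024, 1, 8, 17, 30)
    assert calculate_worked_hours(start, end, break_minutes=30) == 8.0


def test_partial_quarter_hour_is_rounded_down():
    start = datetime(2024, 1, 8, 9, 0)
    end = datetime(2024, 1, 8, 12, 10)
    assert calculate_worked_hours(start, end) == 3.0


def test_break_longer_than_shift_gives_zero_hours():
    start = datetime(2024, 1, 8, 9, 0)
    end = datetime(2024, 1, 8, 9, 20)
    assert calculate_worked_hours(start, end, break_minutes=30) == 0.0
```

All three helpers run in every test, so they are covered, yet any of them could be renamed, merged or replaced without a single test needing to change.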

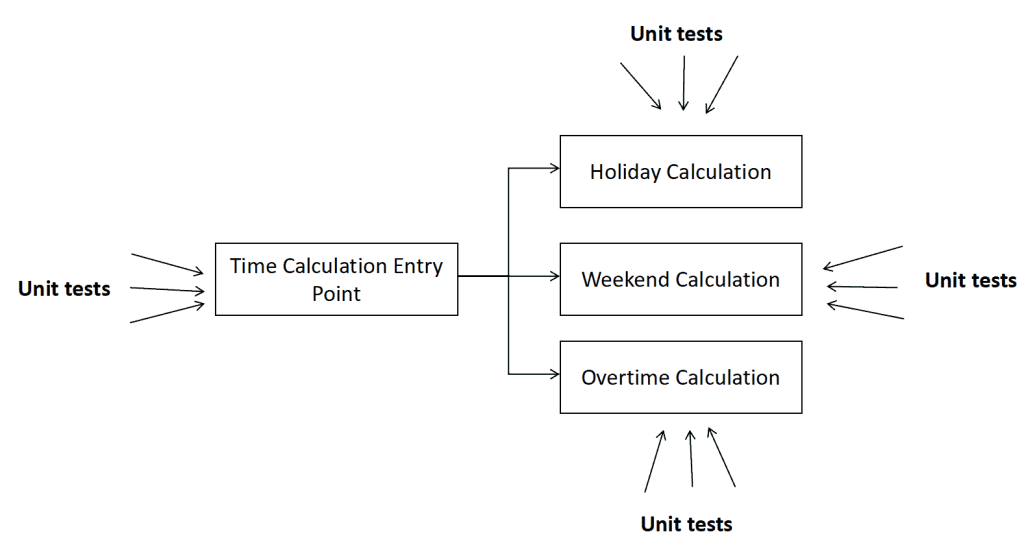

The following diagram illustrates this issue. It shows an entrypoint function for calculating employee worked time. It is a simplified version of the actual calculation engine that was developed. This uses multiple helper functions to achieve the calculation:

(Above) Unit tests on helper methods. This ties you to an implementation. If you change your implementation, you will break your tests.

(Above) Unit tests on entry point methods. This decouples your code from unit tests. You can change your implementation and it’s unlikely that you’ll break your tests.

When looking at these two diagrams, some may say that the first is better because it has more code coverage and more tests. However, you can get the same code coverage from the entry point. Another benefit is that you need fewer tests in total for the same coverage.

CONCLUSION

Always TDD as close as possible to the entry point function. As a rule, do not write unit tests specifically for helper functions; this could be seen as a code smell. Instead, test this code through the entrypoint function. By having all unit tests on entrypoint methods, you can change your implementation much more freely. Your unit tests will then encourage you to refactor instead of holding you back.

COMMON COUNTER-THOUGHTS

Isn’t the point of unit testing to be at a function level?

The proposed TDD approach still executes tests through a function. The function being called is just higher up the call stack. I am not referring to integration tests. These unit tests are still run at code level and the function executions do not leave the application domain. The common view is that unit tests must cover the smallest unit of work. This article argues that this is an anti-pattern that leads to unit tests that are tightly coupled to the implementation.

Writing unit tests at the function level allows for specificity: we know exactly where things will break.

You can still be specific in your unit tests that are run near the entrypoint. You may not know exactly which function is failing in your call stack, but that is a small price to pay for increased ability to refactor.
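
One way to keep that specificity, sketched here in Python, is to give every scenario a descriptive name so that a failure points at the broken behaviour rather than at a helper. The entry point `pay_units`, its helpers, the scenario table and the pay rules (time and a half for overtime, double time on weekends) are all hypothetical and exist only for this example.

```python
from typing import Callable, List


# Hypothetical helpers, never referenced by the scenarios below.
def _is_weekend(day: str) -> bool:
    return day in {"Sat", "Sun"}


def _overtime_hours(hours: float) -> float:
    return max(hours - 8.0, 0.0)


# Hypothetical entry point: hours weighted by made-up pay rules.
def pay_units(day: str, hours: float) -> float:
    if _is_weekend(day):
        return hours * 2.0
    overtime = _overtime_hours(hours)
    return (hours - overtime) + overtime * 1.5


# Each scenario is (name, day, hours worked, expected pay units).
SCENARIOS = [
    ("weekday, standard shift", "Mon", 8.0, 8.0),
    ("weekday, two hours overtime", "Tue", 10.0, 11.0),
    ("weekend, short shift", "Sat", 4.0, 8.0),
]


def failing_scenarios(entry_point: Callable[[str, float], float] = pay_units) -> List[str]:
    """Run every scenario through the entry point and describe the failures."""
    failures = []
    for name, day, hours, expected in SCENARIOS:
        actual = entry_point(day, hours)
        if actual != expected:
            failures.append(f"{name}: expected {expected}, got {actual}")
    return failures


def test_pay_units_scenarios():
    assert failing_scenarios() == []
```

If a change broke the overtime rule, the report would name the "weekday, two hours overtime" scenario. That does not identify the failing function, but it does identify the failing behaviour, which is usually enough to find the cause.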

Unit tests that break show that something has changed. This is the point.

What benefit is there in knowing only that something has changed? The benefit of unit tests is knowing that a change hasn’t broken an expected behaviour. By focusing on this alone, our unit tests encourage us to refactor our implementation.

FURTHER READING

Great course on Pluralsight about clean unit testing:

https://www.pluralsight.com/courses/csharp-unit-testing-enterprise-applications

Blogs and articles with similar opinions:

https://techbeacon.com/app-dev-testing/no-1-unit-testing-best-practice-stop-doing-it

https://rbcs-us.com/documents/Why-Most-Unit-Testing-is-Waste.pdf

* The phrase “unit testing entrypoint functions or closest to the entry point” does not refer to integration or API tests. We are instead writing our unit tests higher up the call stack, closer to the entrypoint. Developers should decide where the best place to write these tests is, as long as it’s higher up the call hierarchy than the current norm.

Special thanks to my colleagues at Cevo for challenging my views and helping me to refine this post.